In the simplest terms, an Epoch represents one complete pass of your entire training dataset through the model.

Imagine you are training an AI to recognize animals, and you have a folder of 1,000 images:

- If the model looks at all 1,000 images once: That’s 1 Epoch.

- If the model repeats that process 10 times: That’s 10 Epochs.
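To make the counting concrete, here is a minimal Python sketch of the loop structure behind those two bullets. The function name `count_samples_seen` and the image file names are made up for illustration, and a real training loop would run a training step where the counter is incremented.

```python
def count_samples_seen(dataset, num_epochs):
    """Return how many samples are processed across all epochs."""
    samples_seen = 0
    for epoch in range(num_epochs):
        # One full trip through this inner loop is one epoch.
        for sample in dataset:
            samples_seen += 1  # placeholder for a real training step
    return samples_seen


# Hypothetical folder of 1,000 images (file names are invented).
images = [f"image_{i}.png" for i in range(1000)]

print(count_samples_seen(images, 1))   # 1000
print(count_samples_seen(images, 10))  # 10000
```

The dataset stays the same size; only the number of passes over it changes.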

Why Do We Need More Than One Pass?

You might think, “If the AI has seen the pictures once, why does it need to see them again?”

Think of it like studying for a massive history exam. Your textbook has 500 pages.

- One Epoch is like reading the book cover-to-cover once. You’ll get the gist, but you’ll probably forget the specific dates and names.

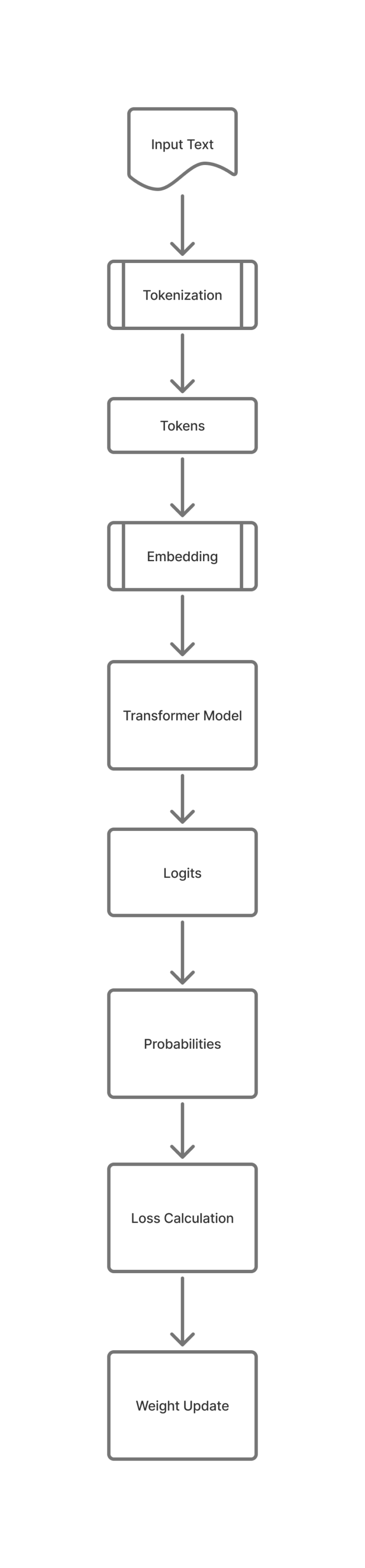

- Multiple Epochs are like revising. Each time the model goes through the data, it adjusts its “internal weights” (the numeric parameters that determine its predictions) to reduce its errors. It’s refining its understanding of the “why” behind the data.
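The revising idea can be sketched with a toy model that has a single weight. This is only an illustration under simple assumptions: the data follows y = 2x, the model predicts `weight * x`, and the weight is nudged after every example using plain gradient descent. The function `train`, the learning rate, and the data points are all chosen for this example, not taken from any particular library.

```python
def train(data, num_epochs, learning_rate=0.01):
    """Fit y = weight * x and return the final weight and per-epoch losses."""
    weight = 0.0  # the model starts out knowing nothing
    losses = []
    for epoch in range(num_epochs):
        total_loss = 0.0
        for x, y in data:
            prediction = weight * x
            error = prediction - y
            total_loss += error ** 2
            # Nudge the weight in the direction that shrinks the squared error.
            weight -= learning_rate * 2 * error * x
        losses.append(total_loss / len(data))  # average loss for this epoch
    return weight, losses


# Toy dataset where the "right answer" for the weight is 2.0.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]

weight_after_1, _ = train(data, num_epochs=1)
weight_after_20, losses = train(data, num_epochs=20)

print(f"after 1 epoch:   weight = {weight_after_1:.3f}")
print(f"after 20 epochs: weight = {weight_after_20:.3f}")
print(f"first epoch loss = {losses[0]:.4f}, last epoch loss = {losses[-1]:.8f}")
```

After a single epoch the weight is still far from 2.0, and after twenty it is very close. The data never changed; the model simply got more chances to correct itself.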

Training a model is a delicate balancing act. You want the AI to learn the patterns, but you don’t want it to turn into a mindless parrot.

1. Too Few Epochs (Underfitting)

The model is “undertrained.” It hasn’t seen the data enough times to pick up the underlying patterns, so it does poorly even on the images it trained on. If you only skim your history book once the night before, you’re going to fail the exam because you simply haven’t absorbed enough information.

2. Too Many Epochs (Overfitting)

This is where the model becomes a “memorizer” rather than a “learner.” It starts to memorize the specific pixels of your 1,000 images rather than learning what an “animal” actually looks like.

The Result: The AI might get 100% on its “practice test” (the training data) but fail miserably in the real world because it can’t apply its knowledge to a picture it hasn’t seen before.
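One common way to spot this in practice is to hold back some data the model never trains on (often called a validation set) and track the loss on both sets after every epoch. The sketch below assumes you already have those per-epoch losses. The helper `best_epoch` is a hypothetical name, and the numbers are invented purely to show the typical shape, not results from a real run.

```python
def best_epoch(val_losses):
    """Return the 1-based epoch number with the lowest validation loss."""
    best_index = min(range(len(val_losses)), key=lambda i: val_losses[i])
    return best_index + 1


# Made-up losses for illustration only (not measured results).
# Training loss keeps falling: the "practice test" score keeps improving.
train_losses = [0.90, 0.60, 0.40, 0.25, 0.15, 0.08, 0.04]
# Validation loss falls, then rises: the model has started memorizing.
val_losses = [0.95, 0.70, 0.55, 0.50, 0.52, 0.60, 0.71]

for epoch, (tr, va) in enumerate(zip(train_losses, val_losses), start=1):
    print(f"epoch {epoch}: train loss = {tr:.2f}, validation loss = {va:.2f}")

print("best epoch:", best_epoch(val_losses))  # 4
```

In this invented example, training past epoch 4 only improves the practice-test score while the score on unseen data gets worse, which is the signature of overfitting.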

Summary

An epoch is simply one full pass over the training data. Just like a student needs to review their notes several times to truly understand a subject, an AI needs multiple epochs to fine-tune its weights and make accurate predictions, but not so many that it starts memorizing instead of learning.

We will cover more related topics in upcoming posts.